
4·5 months ago

Probably from the FAQ pane on the Kickstarter page:

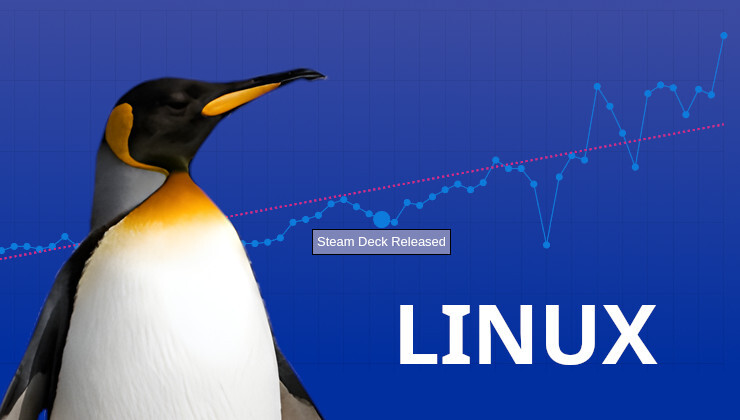

What about Steamdeck support?

Will be 100% supported

Last updated: Tue, April 23 2024 10:55 AM PDT

Have people actually checked the versions there before making the suggestion?

F-Droid: Version 3.5.4 (13050408), the suggested version, added on Feb 23, 2023 (https://f-droid.org/en/packages/org.videolan.vlc/)

Google Play: Updated on Aug 27, 2023 (https://play.google.com/store/apps/details?id=org.videolan.vlc)

The problem seems to be squarely with VLC themselves.

121·9 months ago

There was a GNOME FAQ that describes “guh-NOME” (IPA /ɡˈnəʊm/) as the official pronunciation, because the G stands for GNU. It does acknowledge that many pronounce it “NOME” (/nəʊm/): https://stuff.mit.edu/afs/athena/astaff/project/aui/html/pronunciation.html

9·10 months ago

Undervolting gives the chip additional power and thermal headroom, and can improve situations where throttling would otherwise set in.
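As a rough illustration, assuming the textbook first-order model of CMOS dynamic power (a general approximation, not a measurement of any particular chip), where α is the switching activity, C the switched capacitance, V the supply voltage, and f the clock frequency:

$$
P_{\text{dyn}} \approx \alpha C V^2 f,
\qquad
\frac{P_{\text{dyn}}(0.9V,\ f)}{P_{\text{dyn}}(V,\ f)} = 0.9^2 = 0.81
$$

So a hypothetical 10% undervolt at the same clock would cut dynamic power by roughly 19% under this model, ignoring leakage. That freed power budget is the headroom that can delay throttling, provided the chip stays stable at the lower voltage.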

41·11 months ago

Yes. But one should also note that only a limited range of Intel GPUs support SR-IOV.

4·11 months ago

From https://www.gamingonlinux.com/2023/10/tony-hawks-pro-skater-1-2-adds-offline-support-for-steam-deck/ :

You may be able to get it to work on desktop Linux too in offline mode by using `SteamDeck=1 %command%` as a Steam launch option for the game, which likely won’t work for Windows since the Steam Deck is just a Linux machine.

Tom Clancy’s The Division 2 runs decently on the Steam Deck, and has semi-official, or at least de facto official, support: the developer deliberately switched to a Linux/Wine-compatible version of EAC (Easy Anti-Cheat) earlier this year, and referenced Steam Deck support in the corresponding patch notes.

1·1 year ago

This summary (and sadly, also the GoL title) has somewhat buried the lede here: the firmware update that comes with 3.5.1 Preview adds undervolting controls, with the obvious implication of improved battery life.

37·1 year ago

What you suggested could not be further from the truth. The paper that got her the Nobel was from 2005 (https://www.cell.com/immunity/fulltext/S1074-7613(05)00211-6; it is also cited in https://www.nobelprize.org/prizes/medicine/2023/press-release/), and UPenn claimed in 2013, at least seven years later, that she would not be “of faculty quality” (https://www.wired.co.uk/article/mrna-coronavirus-vaccine-pfizer-biontech).

4·1 year ago

The release notes describe changes in multi-threading, and there also appear to be changes in the graphics stack.

10·1 year ago

Note that Lawrence Yang said in March that “a true next-gen Deck with a significant bump in horsepower wouldn’t be for a few years.” (https://www.rockpapershotgun.com/the-community-continues-to-blow-our-minds-valve-talk-the-steam-deck-one-year-on)

1·1 year ago

The novel bit of this project is actually its use of GGML quantization from llama.cpp for Stable Diffusion. This can offer lower RAM usage and faster CPU inference than all previous CPU implementations, which lacked low-bit quantization, the very technique known to have made CPU and low-RAM LLaMA inference feasible.
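To make “GGML quantization” a bit more concrete, here is a minimal Python sketch of symmetric 4-bit block quantization, loosely modeled on the idea behind GGML's Q4_0 format (blocks of 32 weights sharing one scale). It is an illustration under those assumptions, not GGML's actual code or exact bit layout, and the function names are hypothetical.

```python
import math

BLOCK_SIZE = 32  # weights per block; each block shares one scale


def quantize_block(block: list[float]) -> tuple[float, list[int]]:
    """Map a block of floats to one scale plus 4-bit signed integers in [-8, 7]."""
    amax = max(abs(x) for x in block)
    scale = amax / 7 if amax > 0 else 1.0  # largest magnitude maps to +/-7
    q = [max(-8, min(7, round(x / scale))) for x in block]
    return scale, q


def quantize(weights: list[float]) -> list[tuple[float, list[int]]]:
    """Split a flat weight list into blocks and quantize each one independently."""
    return [quantize_block(weights[i:i + BLOCK_SIZE])
            for i in range(0, len(weights), BLOCK_SIZE)]


def dequantize(blocks: list[tuple[float, list[int]]]) -> list[float]:
    """Reconstruct approximate floats: value = scale * integer."""
    return [scale * v for scale, q in blocks for v in q]


def bits_per_weight(weight_bits: int = 4, scale_bits: int = 16) -> float:
    """Storage cost per weight, including the per-block scale (assumed fp16 here)."""
    return weight_bits + scale_bits / BLOCK_SIZE


if __name__ == "__main__":
    weights = [math.sin(0.1 * i) for i in range(64)]
    blocks = quantize(weights)
    restored = dequantize(blocks)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"{bits_per_weight()} bits/weight, max abs error {worst:.4f}")
```

With these assumptions, storage works out to 4 + 16/32 = 4.5 bits per weight versus 16 for fp16, which is where the RAM savings come from. Any CPU speed-up additionally depends on kernels that compute directly on the packed integers, which this sketch does not attempt.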

The important long-term implication is that people have been targeting the wrong model size for Stable Diffusion, if the goal is quality on commodity hardware (this includes GPUs, too). For example, Stable Diffusion, which Stability AI has touted so much for fitting on commodity hardware, is slightly less than 1 billion parameters. The smallest LLaMA that people nowadays can happily run on a commodity GPU or CPU is already 7 billion parameters. And even OpenAI’s DALL·E 2, which many called prohibitive because “you need a 48 GB GPU” (which is not true, with quantization), is just 3.5 billion parameters.
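The parameter counts above translate into weight memory with simple arithmetic: parameters × bits per weight ÷ 8 bytes. The sketch below uses the counts as stated in this comment (rounding Stable Diffusion up to 1 billion) and counts weights only, ignoring activations, auxiliary models, and runtime overhead, so real requirements will be higher. The 4.5-bit format is an assumed stand-in for 4-bit block quantization with fp16 scales.

```python
def weight_memory_gb(params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (10**9 bytes); weights only, no activations."""
    return params * bits_per_weight / 8 / 1e9


MODELS = {
    "Stable Diffusion (~1B)": 1.0e9,  # "slightly less than 1 billion", rounded up
    "LLaMA 7B": 7.0e9,
    "DALL·E 2 (3.5B)": 3.5e9,
}
# 4.5 bits ~ 4-bit weights plus one fp16 scale per 32-weight block (assumption)
FORMATS = {"fp32": 32, "fp16": 16, "4.5-bit": 4.5}

if __name__ == "__main__":
    for name, params in MODELS.items():
        row = ", ".join(f"{fmt} {weight_memory_gb(params, bits):.2f} GB"
                        for fmt, bits in FORMATS.items())
        print(f"{name}: {row}")
```

By this estimate, 3.5 billion parameters come to 14 GB of weights in fp32, 7 GB in fp16, and roughly 2 GB at about 4.5 bits per weight, while a similarly quantized 7B LLaMA needs roughly 3.9 GB.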

For additional context, Stable Diffusion on CPU has been done before, though with repurposed frameworks rather than a custom C++ project. Notably, there is a Q-Diffusion paper (https://github.com/Xiuyu-Li/q-diffusion), but its results were obtained by simulating the quantization, and the GitHub repo, for example, does not actually offer an implementation with a real speed-up.
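For readers unfamiliar with the distinction, “simulated” (or “fake”) quantization generally means rounding values to a low-bit grid and immediately converting them back to floating point. The minimal sketch below illustrates that general idea only; it is not taken from the Q-Diffusion code, and the function name is hypothetical.

```python
def fake_quantize(values: list[float], bits: int = 4) -> list[float]:
    """Quantize to a signed `bits`-bit grid, then dequantize back to floats.

    The output matches what a low-bit model would compute numerically, but it is
    still stored and multiplied as ordinary floats, so memory use and speed are
    unchanged; a real speed-up needs kernels that work on the packed integers.
    """
    qmax = 2 ** (bits - 1) - 1  # e.g. 7 for 4 bits
    amax = max(abs(v) for v in values)
    scale = amax / qmax if amax > 0 else 1.0
    return [scale * max(-qmax - 1, min(qmax, round(v / scale))) for v in values]


if __name__ == "__main__":
    print(fake_quantize([0.03, -0.5, 0.21, 0.7]))
```

This is why simulated results can show how accuracy holds up at low bit widths without demonstrating any actual memory or speed benefit.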

1·1 year ago

There are unboxing videos out there showing that the carrying case is included: https://www.youtube.com/watch?v=QcW-p5ZbSuc

Unless Valve can find (or pay) a company to custom-package an Nvidia GPU with an x86 CPU (like Intel’s Kaby Lake-G with its in-package Radeon), it is very unlikely. The handheld size makes an “out of package” discrete GPU very difficult.

And getting Nvidia themselves to warm up to x86 is just unrealistic at this point. Even if, say, Nintendo demanded it, the entire gaming market (see AMD’s anemic 2024 Q1 gaming results vs. data center and AI) is unlikely to be compelling enough for Nvidia to take an interest in x86 development over continuing with their ARM-based Grace “superchip.”